1. Background

Traditional voice assessment is based on perceptual analysis. However, objective (acoustic) measurements are also an important part of voice assessment. Acoustic analyses are recommended because they provide objective data, support more accurate diagnosis, and document the effectiveness of articulation therapy (1, 2).

The acoustic features of the voice are related to the function of the auditory system. The auditory system acts as an important sensory component through which speech output is received again as input via acoustic feedback (3). Hearing is one of the most important factors that affect voice because it provides the main feedback for articulatory control (4) and influences moment-to-moment and delayed control of speech (5, 6). A normal auditory feedback system is important for controlling and monitoring aspects of speech, including voice, articulation, and fluency (7).

Because of defects in auditory input, individuals with hearing loss (HL) are unable to develop the appropriate motor control needed for phonation mechanisms and speech production (4, 5). The resulting impairments (such as imprecise vowel and consonant production) can affect the acoustic characteristics of voice and the intelligibility of speech (4, 8, 9).

Vowels are a critical factor for intelligibility of speech (10-12) and are involved in both prosodic and segmental features of speech (13, 14). The corner vowels (“a,” “i,” and “u”) are more important than other vowels because they represent a wide range of tongue positions (15). Persian vowels are divided into 2 groups based on the position of the tongue (front vs. back): front vowels include “i”, “e”, and “æ”, and back vowels include “u”, “o”, and “a”. Based on tongue height, the vowels are divided into 3 groups: high (“i” and “u”), low (“æ” and “a”), and mid-high (“e” and “o”) (16). According to Ansarin (2004), these 6 vowels are symmetrically distributed in a vowel space plot, which supports the efficiency of the vowels “i,” “a,” and “u” as the major means of communication in the majority of world languages (17).

Formant frequencies can be considered important acoustic parameters in the assessment of vowels. The quality of vowels and the differences between them can be determined from formants (10, 18). The first and second formants (F1 and F2) are the most relevant acoustic variables for vowel production and perception as well as for the differentiation and identification of vowels (19).

F1 and F2 are related to tongue height (open versus closed) and tongue advancement (front versus back), respectively (20). F1 decreases when the tongue is elevated (e.g., to form the vowels “i” and “u”) and increases when the tongue is lowered alone or in concert with a downward movement of the jaw (e.g., to form the vowel “a”) (10, 20). F2 increases as the tongue moves forward (e.g., to form the vowel “i”) and decreases as the tongue moves backward (e.g., to form the vowels “a” and “u”) (10, 20). Any deviations or impairments in the vocal tract that affect tongue height, tongue advancement, or lip rounding could cause changes in the formants (21).

Acoustic analyses of vowels in HL speakers have shown decreases in the ranges of F1 and F2 (5, 22) that result in decreased vowel quality, greater overlap of vowel areas, and a tendency toward the “schwa” vowel (5, 23-27). This reduced differentiation of vowels in HL speakers has been related to restricted movements of the tongue, mandible depression, tongue retraction, and neutralization of tongue position (27-30). In addition, tongue movements are limited in the vertical and horizontal dimensions and the tongue arc is reduced, which results in poor differentiation of the vowels and vowel formant centralization (5, 22-25).

The values of F1 and F2 in HL speakers are different from those of hearing speakers. For example, Nicolaidis et al. (2007) evaluated F1 and F2 in 6 Greek speakers with profound HL and 6 normal-hearing speakers. Their results demonstrated a reduction of the vowel space, less vowel differentiation, and a more centralized vowel space for the HL speakers (23). Moreover, Ozbic et al. (2010) examined the differences in vowel formant values between 32 hearing speakers, 14 speakers with severe HL, and 25 speakers with profound HL. They found several differences in formant values (except for the F1 of open “e” and “a”) and confirmed that vowel production in speakers with HL differs from that of hearing speakers (10).

2. Objectives

There is a lack of comprehensive information about F1 and F2 across different degrees of HL. Furthermore, previous studies have analyzed formant frequencies only in pediatric or adult speakers with profound or severe HL and with small sample sizes. Therefore, the purpose of this study was to evaluate the F1 and F2 of the “a”, “u”, and “i” vowels in children (7 to 9 years old) with varying degrees of hearing loss (moderate, moderate to severe, severe, and profound) and to compare them with those of children with normal hearing.

3. Methods

3.1. Participants

This research studied 40 children aged 7 to 9 years with bilateral prelingual HL, including 10 with moderate hearing loss (MHL), 7 with moderate to severe hearing loss (M.SHL), 8 with severe hearing loss (SHL), and 15 with profound hearing loss (PHL). The mean hearing losses for the MHL, M.SHL, SHL, and PHL groups were 43.68 ± 14.63 dBHL, 60.41 ± 10.02 dBHL, 80.81 ± 16.23 dBHL, and 103.34 ± 7.14 dBHL, respectively. All subjects were selected from schools for children with hearing loss in Tehran, Iran, using a convenience sampling method. They had all been fitted with hearing aids, and all children and their families were monolingual native speakers of Persian. The hearing sensitivity of the HL subjects was evaluated on the basis of the pure tone average at frequencies of 500, 1000, and 2000 Hz. According to this assessment, children with a mean hearing threshold of 25 to 55 dB, 56 to 70 dB, 71 to 90 dB, and more than 90 dB were assigned to the MHL, M.SHL, SHL, and PHL groups, respectively. This research also studied 40 age- and gender-matched peers without HL, who comprised the healthy control (HC) group. Informed consent was obtained from all participants, and the study was approved by the ethical committee of Tehran University of Medical Sciences.
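The grouping rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' procedure: the function names `pure_tone_average` and `classify_hearing_loss` are hypothetical, and treating each upper bound as inclusive (so that a non-integer average such as 55.5 dB falls into the next band) is an assumption, because the text gives only whole-number ranges.

```python
from statistics import mean

def pure_tone_average(thresholds_db):
    """Mean hearing threshold (dB HL) over the 500, 1000, and 2000 Hz test tones."""
    return mean(thresholds_db[freq] for freq in (500, 1000, 2000))

def classify_hearing_loss(pta_db):
    """Map a pure tone average to the group labels used in the text.

    Assumption: upper bounds are inclusive, so values between the stated
    integer ranges (e.g., 55.5 dB) are assigned to the next higher band.
    """
    if pta_db < 25:
        return "below HL range"  # hypothetical label; not a study group
    if pta_db <= 55:
        return "MHL"
    if pta_db <= 70:
        return "M.SHL"
    if pta_db <= 90:
        return "SHL"
    return "PHL"

# Hypothetical audiogram for one child (thresholds in dB HL)
example = {500: 40, 1000: 45, 2000: 50}
group = classify_hearing_loss(pure_tone_average(example))
```

Applied to the group means reported above (43.68, 60.41, 80.81, and 103.34 dBHL), the sketch returns the corresponding group label in each case.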

Participants were included if they were monolingual native speakers of Persian and had no accompanying developmental disorders and no comorbid conditions, including neuromuscular diseases (e.g., dysarthria, dyspraxia), mental disabilities, severe mandibular-dental problems, restricted tongue movement, evidence of a current respiratory infection, dyslexia, or other voice disorders (according to reports provided by a neurologist, speech and language pathologist, teachers, and medical profiles).

3.2. Data Collection

All participants’ voices were recorded during 3 separate sustained productions of the vowels “a”, “i”, and “u” at a comfortable and habitual pitch and loudness. Before recording, all participants were instructed to practice and familiarize themselves with the task. The duration of each recording was approximately 5 seconds. The recordings were perceptually monitored by the first and third authors, and participants were asked to sustain the vowel again if these authors judged that it had not been produced at a natural and habitual pitch and loudness.

Acoustic data were collected using a head-mounted condenser microphone (AKG C410) positioned 6 cm from the speaker’s lips and recorded through an external audio interface (TASCAM US-122 mkII) at a sampling rate of 44.1 kHz. The speech samples were collected in a quiet room. To obtain the formant frequencies, the first 500 milliseconds of each signal were removed, and 3 seconds of the remaining signal were selected and analyzed using PRAAT software version 5.3.13. The sustained vowel was selected as the vocal task because sustained vowels provide a stable basis for acoustic analysis, limit vocal tract modification, reduce coarticulation effects, and prevent interference from the prosody of speech (31). In addition, the spectrum that best matched the automatically obtained results was used to determine specific and accurate formant values. In this way, the spectrum and the automatically obtained results were used to cross-check each other. The F1 and F2 values calculated by the software were shown both graphically and numerically.
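The segment selection described above (discard 500 milliseconds at onset, keep 3 seconds) can be expressed as sample indices. The following Python sketch is illustrative only: the function name `analysis_window` is hypothetical, and it assumes that the 3-second window begins immediately after the discarded onset, which the text does not state explicitly. The formant estimation itself was done in PRAAT and is not reproduced here.

```python
def analysis_window(n_samples, sample_rate=44100, skip_s=0.5, length_s=3.0):
    """Return (start, end) sample indices of the analysis segment.

    Assumption: the window starts right after the skipped onset portion.
    """
    start = round(skip_s * sample_rate)
    end = start + round(length_s * sample_rate)
    if end > n_samples:
        raise ValueError("recording is too short for the requested window")
    return start, end

# A recording of approximately 5 seconds at 44.1 kHz
start, end = analysis_window(n_samples=5 * 44100)
segment_seconds = (end - start) / 44100
```

With a 5-second recording, the selected segment leaves about 1.5 seconds unused at the end, so the offset of the vowel is also kept out of the analysis.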

3.3. Data Analysis

The data were analyzed using SPSS version 16. Descriptive statistics (mean, standard deviation, and minimum and maximum values) of the formant frequencies were calculated for each group. Normality of the data was confirmed using the Kolmogorov-Smirnov test (P > 0.05), and homoscedasticity was confirmed using the Levene test (P = 0.34). One-way ANOVA was used to examine differences in formant frequency among the groups, and Dunnett’s post hoc test was used to determine differences between the HC group and each of the HL groups. The significance level was set at α = 0.05.
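For readers unfamiliar with the omnibus test, the F statistic of a one-way ANOVA can be sketched as follows. This is a minimal standard-library illustration, not the SPSS procedure used in the study: the function name `one_way_anova_f` is hypothetical, the sample values are made up, and the P value (which requires the F distribution) and Dunnett’s post hoc comparisons are deliberately left out.

```python
from statistics import mean

def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of groups of observations."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [mean(g) for g in groups]

    # Variation of group means around the grand mean, weighted by group size
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, group_means))
    # Variation of observations around their own group mean
    ss_within = sum((x - m) ** 2
                    for g, m in zip(groups, group_means) for x in g)

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Made-up toy data, three groups of three observations each
toy_groups = [[1, 2, 3], [2, 3, 4], [5, 6, 7]]
f_stat, df_b, df_w = one_way_anova_f(toy_groups)
```

For 5 groups and 80 participants, as in this study, the same function would report 4 and 75 degrees of freedom.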

4. Results

In this study, 80 subjects including 40 children with normal hearing and 40 with different degrees of hearing loss were examined. Table 1 illustrates the demographic features of the groups.

| Participants | Number | Male | Female | Mean Age ± Standard Deviation |
|---|---|---|---|---|
| Moderate hearing impairment | 10 | 8 | 2 | 8.5 ± 0.6 |
| Moderate to severe hearing impairment | 7 | 2 | 5 | 8.9 ± 0.5 |
| Severe hearing impairment | 8 | 4 | 4 | 8.3 ± 0.8 |
| Profound hearing impairment | 15 | 8 | 7 | 8.4 ± 0.8 |
| Control group | 40 | 22 | 18 | 8.4 ± 0.8 |

The mean, standard deviation, minimum, and maximum of F1 and F2 for the vowels /a/, /i/, and /u/ are presented in Table 2.

| Vowel | Formant | Control Mean | Control SD | Control Min | Control Max | Moderate Mean | Moderate SD | Moderate Min | Moderate Max | Moderate-Severe Mean | Moderate-Severe SD | Moderate-Severe Min | Moderate-Severe Max | Severe Mean | Severe SD | Severe Min | Severe Max | Profound Mean | Profound SD | Profound Min | Profound Max | P (ANOVA) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| /i/ | F1 | 390 | 103 | 292 | 930 | 464 | 181 | 304 | 918 | 401 | 80 | 292 | 498 | 447 | 129 | 312 | 680 | 552 | 141 | 361 | 801 | 0.001 |
| /i/ | F2 | 2401 | 365 | 1360 | 3006 | 1927 | 461 | 1287 | 2570 | 1690 | 403 | 1157 | 2396 | 1766 | 462 | 1022 | 2396 | 1676 | 412 | 1190 | 2775 | 0.000 |
| /a/ | F1 | 761 | 103 | 441 | 1038 | 836 | 147 | 637 | 1114 | 881 | 99 | 753 | 1017 | 856 | 92 | 740 | 1008 | 851 | 145 | 609 | 1063 | 0.015 |
| /a/ | F2 | 1270 | 158 | 954 | 1553 | 1456 | 254 | 1084 | 1955 | 1506 | 241 | 1247 | 1942 | 1483 | 160 | 1293 | 1748 | 1605 | 226 | 1164 | 1940 | 0.000 |
| /u/ | F1 | 433 | 70 | 306 | 646 | 387 | 57 | 294 | 460 | 470 | 120 | 328 | 710 | 477 | 128 | 294 | 725 | 506 | 116 | 349 | 734 | 0.015 |
| /u/ | F2 | 1044 | 210 | 642 | 1684 | 1150 | 295 | 845 | 1646 | 1114 | 234 | 883 | 1572 | 1247 | 162 | 1020 | 1490 | 1329 | 231 | 894 | 1670 | 0.001 |

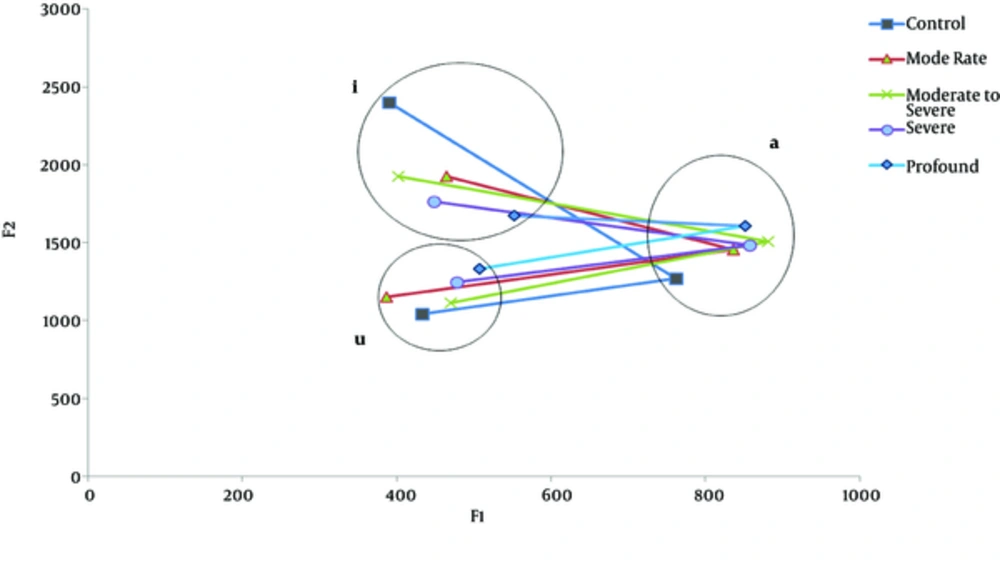

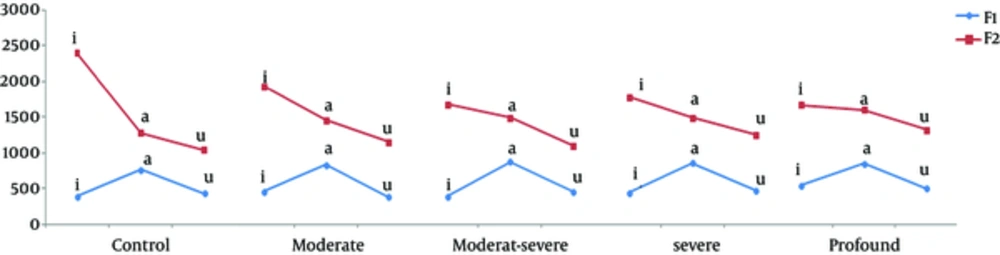

In the HC group, the formant space was larger than in each of the HL groups. The restriction of the vowel space in the HL groups appeared to become more prominent with increasing degree of hearing loss (Figure 1). In the HL groups, F1 was higher than in the HC group for all vowels (except for F1 of /u/ in the MHL group). In addition, F2 of /i/, which is normally high, was lower in the groups with HL, whereas F2 of the back vowels /u/ and /a/, which is normally low, was higher in all groups with HL (Figure 2).

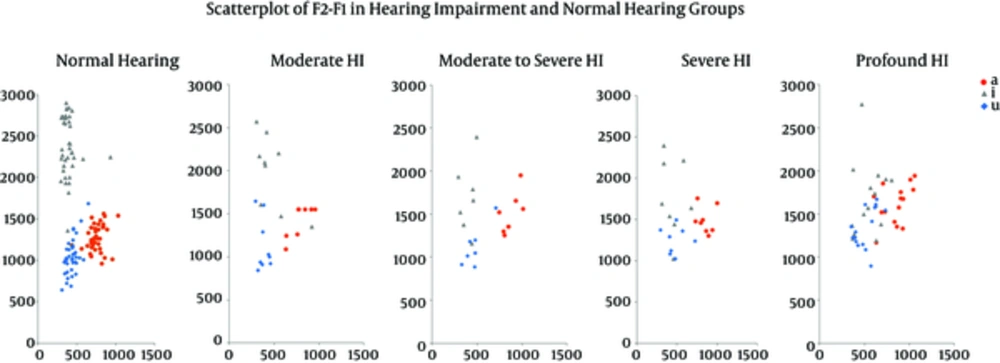

As shown in Figure 3, the formant values of each vowel in the HC group were confined to a limited, well-defined region, and the vowel clouds of the 3 vowels were distinct, with very little overlap. In the HL groups, the spread of the formant clouds changed with the level of hearing loss: as the degree of hearing loss increased (from moderate to profound), the overlap among the vowel clouds for /u/, /i/, and /a/ increased.
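The shrinking vowel space described above can be illustrated numerically with the triangle spanned by the three corner vowels in the F1-F2 plane. The sketch below is an illustration only: the triangular vowel space area is a commonly used summary measure but was not reported in this study, the names `MEAN_FORMANTS`, `triangle_area`, and `vowel_space_area` are hypothetical, and the inputs are the group means (F1, F2, in Hz) copied from Table 2, so individual variability is ignored.

```python
# Group mean (F1, F2) values in Hz, copied from Table 2
MEAN_FORMANTS = {
    "Control":         {"i": (390, 2401), "a": (761, 1270), "u": (433, 1044)},
    "Moderate":        {"i": (464, 1927), "a": (836, 1456), "u": (387, 1150)},
    "Moderate-Severe": {"i": (401, 1690), "a": (881, 1506), "u": (470, 1114)},
    "Severe":          {"i": (447, 1766), "a": (856, 1483), "u": (477, 1247)},
    "Profound":        {"i": (552, 1676), "a": (851, 1605), "u": (506, 1329)},
}

def triangle_area(p1, p2, p3):
    """Area of a triangle from its vertices (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = p1, p2, p3
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2

def vowel_space_area(group):
    """Area (Hz^2) of the /i/-/a/-/u/ triangle for one group's mean formants."""
    vowels = MEAN_FORMANTS[group]
    return triangle_area(vowels["i"], vowels["a"], vowels["u"])

areas = {group: vowel_space_area(group) for group in MEAN_FORMANTS}
```

Under these assumptions, the computed area decreases step by step from the control group to the profound group, which is consistent with the pattern described for Figures 1 and 3.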

The ANOVA showed a significant difference among the 5 groups for F1 and F2 in the vowels /a/, /i/, and /u/ (F1: P = 0.015, P = 0.001, and P = 0.015, respectively; F2: P = 0.0001, P = 0.0001, and P = 0.001, respectively; see Table 2). To locate these differences, Dunnett’s post hoc test was applied. It showed that the first formant of each of the 3 vowels differed significantly only between the HC and PHL groups (Table 3). For F2, however, Dunnett’s test revealed that F2a (HC - MHL groups: P = 0.031, HC - M.SHL groups: P = 0.015, HC - SHL groups: P = 0.023, and HC - PHL groups: P = 0.0001) and F2i (HC - MHL groups: P = 0.005, HC - M.SHL groups: P = 0.0001, HC - SHL groups: P = 0.0001, and HC - PHL groups: P = 0.0001) were significantly different between the HC group and every HL group. For F2u, there was a significant difference only between the HC and PHL groups (P = 0.001) (Table 3).

Level of significance:

| Hearing Loss Group | Compared With | F1a | F2a | F1i | F2i | F1u | F2u |
|---|---|---|---|---|---|---|---|
| Moderate | Control | 0.255 | 0.031 | 0.311 | 0.005 | 0.473 | 0.543 |
| Moderate to severe | Control | 0.055 | 0.015 | 0.999 | 0.0001 | 0.770 | 0.895 |
| Severe | Control | 0.139 | 0.023 | 0.639 | 0.0001 | 0.586 | 0.083 |
| Profound | Control | 0.048 | 0.0001 | 0.0001 | 0.0001 | 0.034 | 0.0001 |

5. Discussion

Children with hearing loss frequently participate in speech and language therapy sessions. Interventions targeting the vocal patterns of these patients are important because their voice and articulation deviations could negatively impact their social and communication participation. Acoustic measurements allow for a more accurate diagnosis and a quantitative analysis of changes in voice and articulation, which can improve the effectiveness of therapy designed to address the associated disorders. The present study was designed to determine differences in the mean F1 and F2 formant frequencies of the vowels “a”, “i”, and “u” in children with different degrees of hearing loss.

The values of the first and second formants of vowels in people with hearing loss are different from those in people with normal hearing (10). Many studies have reported that individuals with hearing loss show little distinction between different vowels and that their vowel space is centralized (23, 25). As Tye-Murray (1991) mentioned, there is a tendency towards similar tongue movements for all vowels in speakers with hearing loss (32), which leads to limited formant spacing. In the current study, the results demonstrated a reduction in the ranges of F1 and F2 in the hearing loss groups and showed that the values differed from those of healthy controls. Furthermore, an overlap of vowel areas was observed in the hearing loss groups. Moreover, with increasing degrees of hearing loss, vowel production converged towards a central location, and as a result, vowel space was reduced (Figures 2 and 3). These results indicate that with an increase in the degree of hearing loss, tongue movements become more limited, which can lead to a lack of distinction between the vowels. According to Monson (1976), the reduced distinction between vowels in individuals with hearing loss could be attributed to inappropriate vowel production due to an absence of appropriate auditory feedback and the relative invisibility of the gestures required for producing vowels (22). In addition, similar production of vowels could be related to the positioning of the tongue in the mouth. According to Dagenais (1992), the tongue position in people with hearing loss is in the middle of the range of positions used by normal individuals. Individuals with normal hearing use different tongue shapes to produce different vowels, while individuals with hearing loss use a flat tongue shape that slopes downward from a high-back position (24).

The results of this study demonstrate that F2i and F2a (unlike F2u, F1a, F1i, and F1u) differ significantly between the HC group and each of the groups with different degrees of hearing loss (MHL, M.SHL, SHL, and PHL). As mentioned previously, the degree of vowel opening, which is associated with lowering of the tongue and the mandible, has a direct relationship with the frequency of F1, which increases with mouth opening. Analysis of F1 revealed that the mean values were mainly higher in the HL groups than in the control group for the back (“a” and “u”) and high-front (“i”) vowels. This may be related to the limited vertical movement of the tongue in the oral cavity of children with HL. In contrast with the study by Schenk et al. (2003), F1 in HL speakers (except for the PHL group) was not significantly different from that in the HC group, although the values of F1 for all vowels (except for F1u in the MHL group) were higher than those of the HC group (25). This discrepancy may be due to the use of a general inclusion criterion of “deaf” in that study, whereas the subjects of the current study were grouped according to different degrees of hearing loss. It may also be due to the use of contextual reading in that study, whereas this study used sustained vowels for sample recording.

Furthermore, the results for F2 indicated that, compared with the HC group, the high-front vowel “i” displayed a significant decrease and the back vowels (“u” and “a”) demonstrated an increase (particularly for “a”) in mean values for all HL groups, which may be due to a limited range of tongue movement in the high-back position. According to Nicolaidis and Sfakiannaki (2007) and McCaffrey and Sussman (1994), higher F2 frequencies tend to be affected to a greater degree, as hearing sensitivity is greatly reduced above 1000 Hz for individuals with hearing impairments (23, 26). Therefore, more errors occur in the high and front vowels than in the low and back vowels. The high-frequency, low-intensity F2 formants of the high vowels were more affected than the lower-frequency, more intense F2 formants of the back vowels. In particular, the shift of F2 to non-standard frequencies was greater in the high front vowels than in the back vowels. In addition, according to McCaffrey and Sussman (1994), people with hearing impairments are expected to have more difficulty perceiving higher, less audible formants than lower, more audible formants.

Kewley-Port (2007) suggested that the recognition threshold of the second formant of vowels is higher in speakers with hearing loss and that their performance in distinguishing vowel formants is poor (33). Moreover, McCaffrey and Sussman reported that in people with hearing loss, the ability to hear the second formant was lower than the ability to hear the first formant (26). Thus, the second formant is expected to be more affected in these individuals. In the current study, the results also showed that the second formant is more affected than the first formant. This may be because F2 relies heavily on tongue placement, which is less visible than the jaw opening and tongue height that mostly control F1 and that are highly visible to speakers with hearing loss (10, 22, 23, 26). However, these greater differences in the F2 values may also be caused by reduced hearing sensitivity at higher frequencies. Other possible reasons include limitation in the range of tongue movements and the relative invisibility of the articulatory gestures needed for vowel production along the front-back dimension of the oral cavity. In speakers with hearing loss, these issues could lead to overlapping vowel areas, a tendency towards the schwa vowel, and vowel centralization (5, 22-26).

5.1. Conclusions

The results of this study showed that vowel production in children with hearing loss differed from that of hearing children and that the two groups could be distinguished based on formant frequency (particularly F2 of “i” and “a”). These results also indicated that vowel production in the profound hearing loss group was significantly different from that in the normal hearing group. In individuals with hearing loss, the vowels tended to shift toward the schwa vowel; in other words, depending on the level of hearing loss, these individuals tended to produce similar-sounding vowels. The second formant was affected more than the first formant, which may be because the articulatory gestures underlying the second formant are less visible than those underlying the first formant and are therefore more difficult to learn through vision.

A possible limitation of this study was the unequal sample size of the groups. Utilizing equal sample sizes in different groups would increase the power of the study and precision of the estimates. Although there was no gender effect in the present study, selecting equal sample sizes according to gender should be considered by future studies. Another limitation was the duration of speech therapy and auditory training sessions before the study, which could not be controlled. In future studies, it would be important to examine the connection between formants and speech intelligibility, while further spectral analysis, including more formants or ratios of formants, is also suggested.